SQL Server and BI/BA With A Smile

Saturday, August 3, 2019

PASS.ORG–MS Renews #SQLSat Sponsorship and More…

Also worth noting are the changes to the By-Laws intended to get more experienced members on the board. Andy Warren has a good blog post to get you thinking about this change.

As with all changes, there are some questions. But over the years, you can see that some changes stick and some do not. That is what I like about the PASS structure: some things get voted in and others get voted out.

Please register and attend the PASS Summit this year. There are discount codes available from Virtual Groups and Local User Groups.

Sunday, February 3, 2019

SQLServerCentral SSAS Tabular Series: 5th Article Cleaning Up Dimensions

Enjoy!!!

Tuesday, November 20, 2018

Power BI World Tour–Dallas Nov 27-29

Please join the Power BI User Community in Dallas on Nov 27th through the 29th for the Power BI World Tour. There will be a one-day workshop on Tuesday and then regular sessions on Wednesday and Thursday.

I am planning to be there and will be presenting on Data Modeling and Intro to DAX. I am really excited to see Adam Saxton and Meagan Longoria presenting. They are both great Power BI pros who do an expert job. Amanda Cofsky will be there too. I always read her monthly Microsoft Program Manager updates for Power BI. And from Baton Rouge, my friend Andy Parkenson is presenting as well.

Hope to see you there. If not, look for upcoming events in Tampa and Chicago in 2019.

Tuesday, September 18, 2018

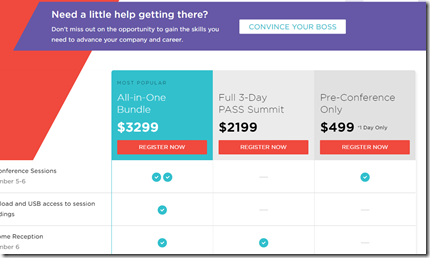

PASSITON discount code for PASS Summit 2018

This week only, PASS has a new discount code, PASSITON, for an additional $200 off 3-day registration. Because the registration price also goes up by $200 next week, registering by Friday saves $400 in total.

Also, everyone who registers by Friday will be entered into a daily prize draw for Summit 2018 merchandise including ball caps, shirts, socks and the chance at our grand prize of a pass to Summit 2019. Please read contest rules at pass.org.

https://www.pass.org/summit/2018/RegisterNow.aspx

Saturday, September 8, 2018

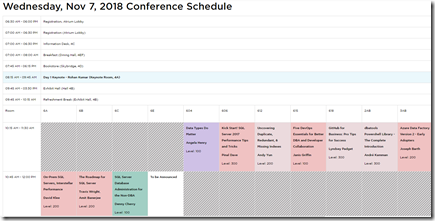

PASS Summit 2018 – Schedule Released

The “full” PASS Summit 2018 schedule has been released. This includes the regular sessions along with the pre-conference sessions which were released a couple of months ago.

Wednesday through Friday offer 75-minute sessions, 3-hour sessions, and Lightning Rounds (four 15-minute sessions). The options available for going to PASS Summit are nice.

The bundle was a nice addition last year and continues this year.

Here are some of the sessions I look forward to on Wednesday:

David Klee’s On-Prem SQL Servers, Interstellar Performance

Lightning Talks: BI (Paul Turley, Reza Rad, Cathrine Wilhelmsen and Chris Webb)

Reeves Smith – Follow Gartner’s Lead, Become a Citizen Data Scientist

I will follow up on the Thursday and Friday sessions in the next blog.

Monday, August 20, 2018

SQLSaturday Orlando–Power BI On-Premises In A Day

Please join me for a day of developing and deploying Power BI reports in an On-Premises environment. This event is a pre-conference session on the Friday before SQLSaturday #801 in Orlando, FL. This is their 12th SQLSaturday and should be a good one. Here is the schedule of the event on Saturday. Remember, the Saturday sessions are FREE!!!

As for the pre-conference Power BI event, there is a charge of $149. Please sign up using this link.

Even though the reports will be deployed to SQL Server Power BI Reporting Services, much of the day covers Power BI development that will help anyone using the Desktop, Azure, or On-Premises versions.

Wednesday, July 25, 2018

SQLServerCentral.com Stairway Series–SSAS Tabular

The first two articles have been published in a SQLServerCentral.com Stairway series on SQL Server Analysis Services Tabular models. It is truly a blessing to be able to publish on SQLServerCentral.com. I have been reading this site every day since I started working with SQL Server 7.0. It has been my go-to resource for informative articles.

The first article is Why Use Analysis Service Tabular? This explains what I have seen in the field about SSAS.

The second article is about installing the components for using SSAS Tabular.

The third will build a model from the Wide World Importers DW database.

I hope you enjoy the series and will share your own uses for Analysis Services Tabular models with me.